Introduction

From the outset, deliberative theory has sought to understand and address legitimation problems in democracy (Barber 1984; Cohen 1989; Habermas 1975), and some recent reformulations of deliberative theory have aimed to make this legitimating function more explicit (Curato & Böker 2016; Richards & Gastil 2015; Schwartzberg 2015). Since the advent of deliberative theory, however, institutional legitimacy has remained a global challenge. From Europe to Australia, younger generations have grave doubts about whether democracy is ‘essential’ any longer, and the only countries in which public opinion has moved against strong authoritarian leaders are those with fresh memories of authoritarianism, such as Pakistan or Belarus (Foa & Mounk 2017).

Since this special issue examines the relationship between deliberation and digital technology, it is important to theorize how digital platforms might address the legitimacy problem that still plagues modern democracies. Considerable research, including contributions to this issue, shows how digital tools can exacerbate problems in democracy, such as through personalizing information streams to reinforce biases and tribalism (e.g., Sunstein 2017). If harnessed effectively for more deliberative purposes, however, digital technology could serve more as a brake than as an accelerator for civic decline.

Many efforts to design online discussions have aimed, explicitly or otherwise, at steering the public toward a more deliberative mode of citizenship and governance (Coleman & Moss 2012) as a means toward boosting system legitimacy (Gastil & Richards 2017). Preliminary assessments suggest that such efforts may prove effective (Patel et al. 2013; Simon et al. 2017). Whether or not that promise holds, the move toward online systems of public engagement provides deliberation scholars an exceptional research opportunity. Systemic theories of deliberation (e.g., Parkinson & Mansbridge 2012) currently lack a viable means of testing the most complex relationships among variables operating at different levels of analysis and across vast social scales. The creation of an integrated online system linking citizens and policymakers could provide streams of longitudinal data that make possible the inspection of each link in the chain from citizen participation to system legitimation.

Thus, I use this special issue as an opportunity to develop a testable empirical model of how deliberation could generate legitimacy for public institutions through an online civic engagement system. I start by justifying the study of online consultation systems and provide a concrete illustration of such systems with an example from Spain (Peña-López 2017a). I then present the theoretical model and specify sets of working, or preliminary, hypotheses regarding, in turn, public participation, deliberative quality, decision quality, government responsiveness, and institutional legitimacy. In the concluding section, I highlight the implications of this model for deliberative theory, research, and practice.

Justifying the Investigation of Online Deliberation

Before laying out my empirical model of institutional legitimacy, I will argue for why it is crucial to study the kind of online consultation systems that make my empirical model testable. A longstanding problem in public consultation and civic engagement is sustaining the connection between the convener of public events and the public invited into such spaces. Although the past few decades have seen a proliferation of models for deliberative public engagement (Gastil & Levine 2005; Nabatchi et al. 2012), these cases are typically one-off interventions or a weakly linked schedule of events that make it difficult for participants and governments to follow up with each other. Although the notion of a deliberative system endeavors to map the linkages among media, public events, institutions and informal encounters (Parkinson & Mansbridge 2012), in practice such connections are intangible for residents of a complex city, let alone a state or nation.

Digital platforms for ongoing public consultation and civic engagement have the potential to sustain connections between people and their elected or appointed officials in a democratic system. Even some skeptics recognize this. In #Republic: Divided Democracy in the Age of Social Media, Sunstein (2017) noted the hazards that an increasingly digital life poses. We are becoming more tribal in our politics, as our information streams become personalized to reinforce biases and deprive us of common points of reference. Even so, the remedy Sunstein glimpses on the horizon is not less online activity but a richer information environment, which draws citizens into deliberative spaces and spontaneous encounters with diverse opinions.

Theorists of both democracy and digital technology have tried to envision a harmonious marriage between the two concepts (e.g., Barber 1984; Becker & Slaton 2000; Henderson 1970). The most ambitious theories of digital civic innovation have envisioned complex online spaces where citizens can learn, create, debate and vote together on everything from social issues to policy and budget items (Barber 1984; Becker & Slaton 2000; Davies & Chandler 2012; Gastil 2016; Muhlberger & Weber 2006).

In partial ways, such systems are already coming online (Noveck 2018), and one example that stands out operates in Spain (Peña-López 2017b; Rufín et al. 2014). Multiple municipalities have used the open source software called ‘Consul’ to create civic engagement opportunities, from participatory budgeting exercises to debates and policy consultations (Peña-López 2017a; Simon et al. 2017; Smith 2018).

For the purpose of this article, it is enough to describe the basic features of Consul’s online platform, with some small modifications to show its potential as an ongoing system of public deliberation.1 Although customizable for many other purposes, Consul’s design includes core elements, which I highlight in italics. I describe these in terms of a city government and its constituents, some subset of whom choose to participate in the consultation process. Although Consul serves as a useful illustration of online public consultation, it warrants noting that it does not incorporate every aspect of the theoretical model described herein.

Within Consul, a *citizen proposals* feature lets participants submit policy proposals. Those ideas that get support from a low threshold of other participants (e.g., 1 percent) become subject to a *vote* of all participants, with majorities required to send the idea forward for further review. That same vote tool can evaluate proposals that come directly from the city or those that emerge from a *collaborative legislation* process (akin to crowdsourcing mixed with comment and review). A *participatory budgeting* process combines these tools, with an intermediate step where city officials (and/or nongovernmental organizations) sift out nonviable budget items. Finally, the system permits the creation of more open *debates*, which need not tie back to particular policy proposals or budget ideas. Beneath all these processes lie other useful infrastructures, such as a secure registration system and verified user accounts (at least for political representatives).

With more than a dozen Spanish cities—and a roughly equal number across Latin America—using Consul, it could end up spreading as widely and rapidly as participatory budgeting itself. Just as that budgeting process has remained open to researchers (e.g., Gilman 2016; Wampler 2007), digital innovators may also permit data access sufficient to track participants’ experiences in a digital consultation system over time. Such research, however, would need to be as publicly accountable as the platform itself, the development of which has been more democratic than that of the commercial consultation systems more widely adopted (Smith 2018).

For the purpose of this illustration, imagine that every user’s digital footprints could be traced from their first use to their ongoing contributions to their process evaluations. Those data would stand alongside parallel records for city officials using the software and responding to participant recommendations and decisions. With careful anonymization of open-ended text (e.g., from chats and discussions) and user identification numbers masking individual identities, researchers could assess the net contribution of discrete forms of participation to long-term changes in government behavior and participants’ civic attitudes. The following section offers a model for organizing such data to test a chain of hypotheses about the potential of deliberation to bolster legitimacy for public institutions.
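As a minimal sketch of the masking step imagined above, the following Python fragment replaces account identifiers with stable pseudonyms so that one participant's records can still be linked over time. The use of a keyed hash (HMAC-SHA256), the field names, and the sample records are all assumptions for illustration and do not describe how Consul or any deployed platform stores its data. Masking identifiers in this way does not anonymize open-ended text, which would require separate treatment.

```python
import hashlib
import hmac


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Map an account identifier to a stable pseudonym with a keyed hash."""
    digest = hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256)
    # A shortened hex digest serves as the masked identification number.
    return digest.hexdigest()[:16]


# Hypothetical activity records; field names are illustrative only.
events = [
    {"user": "ana@example.org", "action": "proposal_submitted"},
    {"user": "ana@example.org", "action": "vote_cast"},
    {"user": "luis@example.org", "action": "debate_comment"},
]

# Assumption: the key is held by a data steward, not by the researchers.
key = b"replace-with-a-secret-held-by-the-data-steward"

masked = [
    {"user": pseudonymize(e["user"], key), "action": e["action"]}
    for e in events
]
```

Because the same input always yields the same pseudonym under a given key, longitudinal tracing remains possible without exposing the original identifier to the research team.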

Modeling a Feedback Loop Generating Institutional Legitimacy

The model I propose uses feedback loops to show how deliberation stands at the center of a continuous process that leads from increased public participation to heightened legitimacy for public institutions—and back again. Before illustrating that model, it warrants noting that this bears some resemblance to other theories that take a similar approach, including the self-reinforcing model of deliberation (Burkhalter, Gastil & Kelshaw 2002) and the symbolic-cognitive proceduralism model (Richards & Gastil 2015). The former model focused on how individual cognitive variables promoted deliberation (e.g., the perceived appropriateness of such a process), then became reinforced as a result (e.g., via the formation of deliberative habits). The latter model moved across levels of analysis to show, for instance, how a specific deliberative event with high process integrity might spark mass public demand for governments to offer more such opportunities.

Given the aforementioned variety of variables associated with deliberation, one could devise a nearly infinite variety of such feedback loops. The model I propose reflects practical choices about what matters most for contemporary democratic systems—rendering sound policy choices and gaining institutional legitimacy. It focuses on the kind of participation and deliberation opportunities most likely to be available through an online system such as Consul.

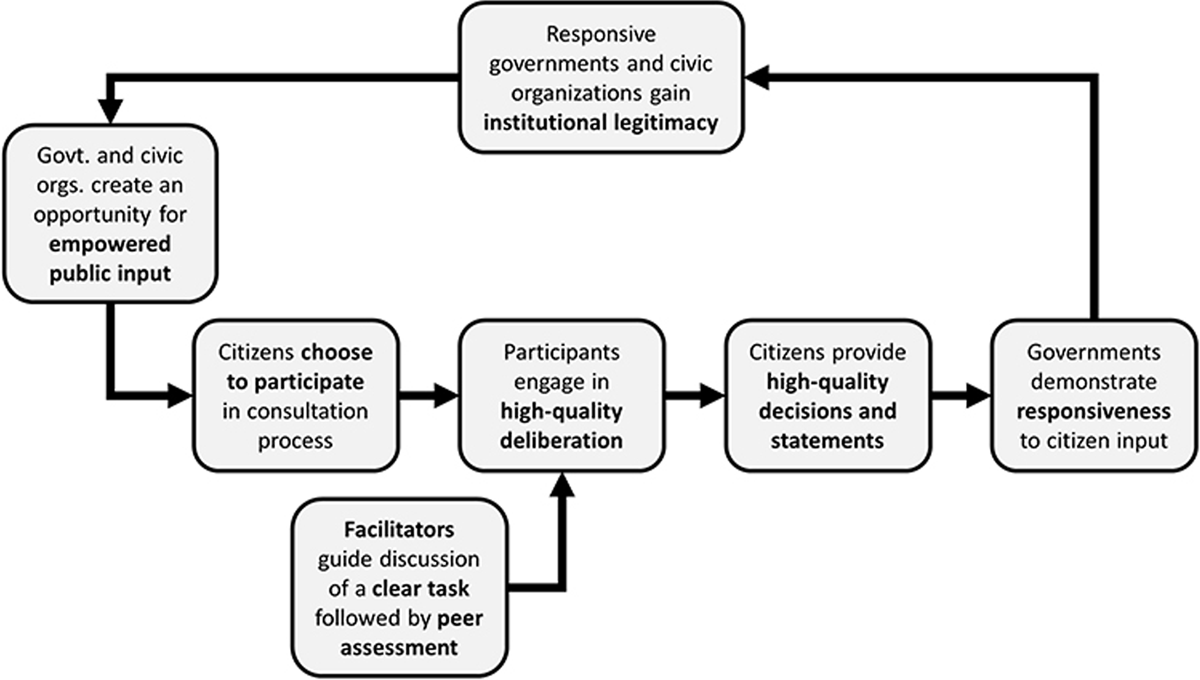

Figure 1 summarizes the model, which links variables or, in most cases, sets of variables. Because the model is circular, each of the variables becomes, at one time or another, a focal dependent variable. Overall, the model aims to show how opportunities for empowered public engagement (created or co-created by governments and civic organizations) can engender democratic legitimacy and sustain public participation. More precisely, the model lays out working hypotheses that predict the effects of different variables on public participation, the quality of deliberation that ensues, and the quality of decisions (or recommendations) that participants render. At the back end of the model, quality decisions influence the level of government responsiveness, which triggers legitimacy perceptions (for both the civic organizations and the government institutions involved). In turn, that heightened legitimacy might lead civic and government actors to create more opportunities for public engagement in the future.

At this level of abstraction, the model in Figure 1 could describe engagement processes that happen offline as well as online. Nevertheless, since an online system such as the Consul platform described earlier could prove crucial to completing the model’s central feedback loop, I will show how civic technology has a role to play for each linkage within this model.

Working Hypotheses Derived from the Model

In this section, I specify the hypothesized relationships in the model that warrant statistical testing. (I call these ‘working hypotheses’ because they stand at a higher level of abstraction than a specific test might require.) Table 1 summarizes these hypotheses and the variables that must be measured to test them. These hypotheses do not capture the full set of factors that influence a given variable. Rather, the point is to capture those variables integral to tracing paths through the model’s feedback loop, along with key influences on the quality of deliberation in the center of the model. If each of the hypotheses functions like a link in a chain, the whole feedback loop fails if a single link breaks.

Table 1. Working hypotheses in a feedback-loop model of online public consultation.

| Hypotheses | Predictor variables … | … have a positive effect on dependent variables |
|---|---|---|
| H1 | Empowered opportunities: degree to which participants are invited to influence rules, policies, or budgets | Active engagement |
| H2 | Active engagement; task clarity; facilitation provided; peer assessment linked to incentives | Quality of deliberation |
| H3 | Quality of deliberation | High-quality decisions/recommendations |
| H4 | High-quality decisions/recommendations; structural features: aspects of government and civic culture that promote or limit responsiveness | Government responsiveness |
| H5 | Government responsiveness | Institutional legitimacy |
| H6 | Institutional legitimacy | Empowered opportunities |
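The chain logic of these hypotheses can be sketched in a few lines of Python. The fragment below encodes each hypothesis as a link from predictors to one dependent variable and checks that the links form a single closed loop, which holds only if every link is supported. The variable labels are shortened, and the function name and the true/false coding of 'support' are assumptions made for illustration; an actual test would rest on statistical estimates rather than a binary flag.

```python
# Each working hypothesis links predictor variables to one dependent variable.
HYPOTHESES = {
    "H1": (["empowered opportunities"], "active engagement"),
    "H2": (
        ["active engagement", "task clarity", "facilitation",
         "peer assessment linked to incentives"],
        "quality of deliberation",
    ),
    "H3": (["quality of deliberation"], "decision quality"),
    "H4": (["decision quality", "structural features"],
           "government responsiveness"),
    "H5": (["government responsiveness"], "institutional legitimacy"),
    "H6": (["institutional legitimacy"], "empowered opportunities"),
}


def loop_is_closed(supported: dict) -> bool:
    """Return True only if every link holds and the links form one cycle."""
    if not all(supported.get(name, False) for name in HYPOTHESES):
        return False  # a single broken link breaks the whole loop
    start = "empowered opportunities"
    current = start
    for _ in HYPOTHESES:
        # Find the one hypothesis that uses the current variable as a predictor.
        outcomes = [dv for preds, dv in HYPOTHESES.values() if current in preds]
        if len(outcomes) != 1:
            return False
        current = outcomes[0]
    return current == start


all_supported = {name: True for name in HYPOTHESES}
one_broken = dict(all_supported, H4=False)
print(loop_is_closed(all_supported), loop_is_closed(one_broken))
```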

H1: Predicting active engagement

Dependent variables. The first set of hypotheses focuses on active participation in a public process. I break participation into two parts: a willingness to participate among those invited and the level of active engagement among those who participate. The first of these concerns the rate at which a process attracts those who are invited to participate. The response rate for a process varies widely based on variables external to this model, such as providing payment for participation (Crosby & Nethercutt 2005) and intensive recruiting efforts (Fishkin 2009). Some online processes have a cap on the number of persons who can attend (e.g., if they involve a finite resource, such as trained facilitators), so this first variable is measured as the percentage of valid contacts (i.e., subtracting bounced email addresses) yielding an affirmative willingness to participate.2
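The willingness measure just described reduces to simple arithmetic, sketched here in Python. The function and parameter names are hypothetical, and the counts in the example are invented for illustration.

```python
def willingness_rate(invited: int, bounced: int, accepted: int) -> float:
    """Percentage of valid contacts who affirm a willingness to participate."""
    valid_contacts = invited - bounced  # drop bounced email addresses
    if valid_contacts <= 0:
        raise ValueError("no valid contacts remain after removing bounces")
    if accepted < 0 or accepted > valid_contacts:
        raise ValueError("accepted must lie between 0 and the valid contacts")
    return 100.0 * accepted / valid_contacts


# Invented example: 1,000 invitations, 50 bounces, 190 acceptances.
rate = willingness_rate(invited=1000, bounced=50, accepted=190)
print(rate)  # 20.0
```

Measuring willingness rather than attendance keeps the variable comparable across processes that cap the number of seats.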

A second participation measure recognizes that among those who take part in a public event, there are widely varying rates of active engagement. Intensively facilitated deliberative events, such as the Australian Citizens’ Parliament, can increase the equality of participants’ engagement over the course of several days—but come nowhere near equalizing engagement levels (Bonito et al. 2014). Because one can remain cognitively active during a process without speaking as often as others, it is important to augment external measures of verbal participation rates with a psychometric scale measuring ‘deliberation within’ (Weinmann 2018).

Predictor variables. Whether people participate in politics and public affairs depends on myriad factors, including time, money, and civic capabilities (Brady, Verba & Schlozman 1995). That said, a public engagement program can attract participants if it constitutes an empowered opportunity. What makes such opportunities most compelling is the chance for real influence, such as through participatory budgeting (Gilman 2016; Wampler 2007), civil and criminal juries (Gastil et al. 2010), or local councils (Barrett, Wyman & Coelho 2012; Coelho, Pozzoni & Montoya 2005). More modestly, Neblo (2015) found that many citizens, particularly millennials, want a more compelling online experience of public engagement, even if only to meet online with a public official. By contrast, forums that provide little chance for influence have equally minuscule odds of sparking interest among disengaged citizens (Dunne 2010).

How does one measure the degree to which an engagement opportunity represents one that empowers citizen participants? A rank-ordered empowerment scale could have at its top those processes that have binding authority over a public good, whether this is budget resources, policy decisions, legislative proposals, or valuable message content, as in the advisory statements the Citizens’ Initiative Reviews write for mass distribution to the electorate (Johnson & Gastil 2015). A second level of empowerment would be a process that offers participants a reasonable expectation of influence on such matters, as in Deliberative Polling (Fishkin 2009) and Citizens’ Juries (Crosby & Nethercutt 2005; Smith & Wales 2000). The third level would simply involve meeting with public officials or their agents, as in conventional public hearings and board meetings, but without an expectation of influence, as is common for gatherings that permit only limited discussion and make no pretense of participant influence or equality (Fiorino 1990; Gastil 2000). Finally, the lowest level of empowerment (a score of zero on this scale) convenes citizens without granting any direct access or connection to public representatives, as is common in online forums in which citizens are little more than indirect ‘information providers’ for a government agency (Dunne 2010).
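One way to operationalize this rank-ordered scale is as an ordinal code, sketched below in Python. Only the score of zero for the lowest level comes from the description above; the values 1 through 3, the level names, and the dictionary of example codings are assumptions made for illustration.

```python
from enum import IntEnum


class Empowerment(IntEnum):
    """Ordinal empowerment scale; values above zero are assumed codes."""
    NO_ACCESS = 0                 # no direct connection to representatives
    ACCESS_WITHOUT_INFLUENCE = 1  # meets officials, no expectation of influence
    EXPECTED_INFLUENCE = 2        # reasonable expectation of influence
    BINDING_AUTHORITY = 3         # binding authority over a public good


# Illustrative codings based on the examples named in the text.
EXAMPLE_CODINGS = {
    "Citizens' Initiative Review": Empowerment.BINDING_AUTHORITY,
    "Deliberative Polling": Empowerment.EXPECTED_INFLUENCE,
    "Citizens' Jury": Empowerment.EXPECTED_INFLUENCE,
    "Conventional public hearing": Empowerment.ACCESS_WITHOUT_INFLUENCE,
    "Information-provider online forum": Empowerment.NO_ACCESS,
}

# Rank processes from most to least empowered.
ranked = sorted(EXAMPLE_CODINGS, key=EXAMPLE_CODINGS.get, reverse=True)
print(ranked[0], ranked[-1])
```

Treating the scale as ordinal rather than interval avoids implying that the distance between adjacent levels is equal.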

H2: Predicting deliberative quality

Dependent variables. A surplus of definitions exists for deliberation, ranging from precise coding metrics for argument quality (Steenbergen et al. 2003) to subjective observer assessments, such as the ‘free flow’ of ideas (Mansbridge et al. 2006). I do not attempt to resolve conceptual and measurement disputes about deliberation but opt instead to stress what I consider the two primary dimensions: the analytic rigor of a discussion and the democratic quality of the social exchanges that occur during such discussions (Gastil 2008).

In a review of case studies compiled in the Participedia.net archive, these two dimensions were subdivided into the breadth and depth of substantive issue analysis, on the one hand, and the consideration given to others’ ideas and the respect given to other individuals during deliberation on the other hand (Gastil et al. 2017). Neutral third-party coders made those assessments based on the materials available in the case write-ups, and third-party ratings also have proven reliable using transcripts for face-to-face discussions (Gastil, Black & Moscovitz 2008) and more complex threaded discussions in online work groups (Black et al. 2011).

Predictor variables. I begin with a predictor—level of active participant engagement—that I defined in the previous section. Because this model follows a loop, I will flip dependent variables into predictors in each new section, because their role changes as hypotheses move forward through the chain of cause and effect.

What requires justification in this and all cases, however, is predicting that this variable has a causal effect on a new dependent variable. In this case, active engagement should prove vital to deliberative quality because the deliberative process depends on both cognitive and verbal contributions from participants. Across a wide range of deliberative processes, design elements aim to elicit active participation precisely for this purpose (Gastil & Levine 2005). More than once, however, researchers of hybrid processes have found greater inequality of participation online versus subsequent face-to-face processes (Showers, Tindall & Davies 2015; Sullivan & Hartz-Karp 2013). Widespread participant disengagement decreases the likelihood of hearing a full breadth of perspectives, while also limiting the argumentative depth of discussion. Meanwhile, disengaged participants may be less likely to feel that their perspectives have been heard, or respected—a problem that manifests itself in majoritarian bodies that foreclose opportunities for minority opinion, or even dissent (Gastil 2014; Geenans 2007).

The remaining predictors represent the only set of variables that stands apart from the main flow of cause and effect in the model. I hypothesize that these structural features of an online public consultation system give it the capacity to generate high-quality deliberation, but no other model variables have a reciprocal effect on them. Moreover, this structural set is illustrative rather than exhaustive. I include in the model only three variables (task clarity, facilitation, and peer assessment), but ongoing testing will clarify which of these (or other) structural features best promote deliberation.

Two structural features, task clarity and facilitation, come as a pair common to deliberative processes, whether conducted online or face-to-face. Many of the most longstanding deliberative consultation processes have stressed the importance of a clear ‘charge’ for a body, which gives citizens both a topical focus and a well-defined purpose, and Citizens’ Juries, Planning Cells, and Consensus Conferences all place a premium on professional group facilitation (Crosby & Nethercutt 2005; Hendriks 2005). The Oregon Citizens’ Initiative Review illustrates this most clearly because it asks two dozen citizens to spend their 3 to 5 days together scrutinizing a specific ballot measure. A team of facilitators helps the panelists work through complex issues so that the citizens can write a one-page issue analysis for the full electorate to read (Knobloch et al. 2013).

As for measuring these factors, a subjective scale could measure task clarity both before and after deliberation to ascertain whether a complex deliberation functions well enough so long as participants get up to speed during the process itself. Published work on deliberative facilitation suggests using a rank-order approach—strong, weak, or no facilitation (Dillard 2013), although more subtle measures of facilitation style should be used as available.

A predictor that could be crucial in an ongoing consultation system asks participants to assess one another after each deliberation. I call this peer assessment linked to incentives to capture a wide range of potential social-rating methods, so long as those give participants a reason to both desire favorable ratings and give accurate ratings. Online peer rating systems, such as those used by eBay and Uber, have proven themselves as effective accountability mechanisms that build confidence and trust between buyers and sellers (Lee 2015; Thierer et al. 2015). Dystopian fiction, however, cautions us about how such systems could go awry, as in the Black Mirror episode ‘Nosedive,’ in which social rating cascades lead to social isolation. In China’s authoritarian system, this scenario is well underway, with the government licensing ‘eight private companies to come up with systems and algorithms for social credit scores’ (Bostman 2017).

The promise and hazards of such systems suggest experimenting with a variety of social designs. For the purpose of predicting deliberative quality, such a system should ask participants to rate one another on precisely the same dimensions used to assess deliberation itself—breadth, depth, consideration, and respect. Non-obvious questions might touch on underappreciated social roles. For example, a rating question might ask which participants helped spark the most substantive disagreement—a necessary part of deliberation that can make participants uncomfortable (Esterling, Fung & Lee 2015; Zhang & Chang 2014). Or, which participants actively encouraged quieter members to speak up? To encourage more accurate ratings, random third-party spot checks could be compared against participants’ subjective assessments to amplify high-fidelity ratings and discount ones that appear punitive or disingenuous. Beyond these suggestions, possibilities for deliberative peer assessment abound (Black et al. 2011; Chang, Jacobson & Zhang 2013; Steenbergen et al. 2003).
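The spot-check idea above can be illustrated with a short Python sketch. Each rater receives a fidelity weight based on how closely their ratings match third-party spot checks, and a participant's peer score becomes the fidelity-weighted mean of the ratings received. The 1 to 5 rating scale, the linear weighting rule, the neutral weight for raters not covered by any spot check, and all names and numbers are assumptions, not features of any existing platform.

```python
from statistics import mean

SCALE_MIN, SCALE_MAX = 1, 5  # assumed rating scale


def rater_fidelity(rater_scores: dict, spot_checks: dict) -> float:
    """Agreement with third-party spot checks, from 0 (worst) to 1 (exact)."""
    shared = [target for target in rater_scores if target in spot_checks]
    if not shared:
        return 0.5  # assumed neutral weight when no spot check applies
    error = mean(abs(rater_scores[t] - spot_checks[t]) for t in shared)
    return 1.0 - error / (SCALE_MAX - SCALE_MIN)


def weighted_peer_score(target: str, ratings: dict, spot_checks: dict) -> float:
    """Fidelity-weighted mean of the scores that peers gave to one target.

    ratings maps each rater to a dict of {target: score}.
    """
    pairs = [
        (rater_fidelity(scores, spot_checks), scores[target])
        for rater, scores in ratings.items()
        if target in scores and rater != target  # ignore self-ratings
    ]
    total_weight = sum(weight for weight, _ in pairs)
    if total_weight == 0:
        raise ValueError("no usable ratings for this participant")
    return sum(weight * score for weight, score in pairs) / total_weight


# Invented example: rater r2 gives p a punitive score that the spot check contradicts.
ratings = {"r1": {"p": 4, "q": 3}, "r2": {"p": 1, "q": 3}}
spot_checks = {"p": 4}
score_p = weighted_peer_score("p", ratings, spot_checks)
print(round(score_p, 2))  # 3.4, versus an unweighted mean of 2.5
```

In this invented case the discounted rating still counts, but it pulls the score down far less than it would in a simple average.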

As for transforming those ratings into something that has value within the system, many approaches could prove feasible. A reputation system by itself might be enough, given participants’ desire to receive favorable marks from their peers, but a ‘gamified’ approach could provide more powerful incentives (Chou 2015; Lerner 2014). An online system could offer within-system opportunities for leveling-up toward greater levels of opportunity (and responsibility) within the system (Gastil 2016). Or, there could be external incentives—even monetary ones—for making valuable contributions to deliberation, analogous to the rewards given in ‘forecasting tournaments’ (Tetlock et al. 2014). Whichever form such incentives take, the point is to tie them as directly as possible to peer assessments of contributions to the deliberative process—as opposed to other socially desirable behaviors.

H3: Predicting decision quality

Dependent variables. Aside from the indirect benefit of legitimacy, a key rationale for involving lay citizens in governance is improving public policies themselves (Dzur 2008). Thus, at the center of my model lies the quality of the recommendations, decisions, and other substantive outputs that come from a citizen deliberation (hereafter called simply ‘decisions’). Measuring the quality of decisions, however, presents problems both practical and philosophical. Critics have pointed out that deliberation cannot justify itself in terms of the decisions it yields because there exists no independent ground from which one might judge the public value of any decision (Ingham 2013).

Even so, there are two approximations that should suffice. First, one can measure the level of participant support for decisions/recommendations (e.g., Paul, Haseman & Ramamurthy 2004), because participants in a deliberative process can end up varying in their assessment of their own decisions. Evaluative disagreement occurs even on juries that use a unanimous decision rule (Gastil et al. 2010), and there is reason to be concerned about the quality of any group decision that even the group’s own members are reluctant to support.

That said, a deliberative body may fall victim to self-congratulatory assessment of its decisions, so a third-party judgment is warranted. After setting aside the subjective question of whether the group’s decision is desirable in terms of a public good, criteria suitable for expert judgment include a decision’s feasibility; the sufficiency of its rationale; and its recognition of drawbacks, tradeoffs, and dissenting viewpoints. The research team studying the Oregon Citizens’ Initiative Review has made similar assessments of the one-page statements written by citizen panelists (Gastil, Knobloch & Richards 2015), but no inter-rater reliability data exist for such a measure.

Predictor variables. Epistemic theories of democracy presume a direct link from deliberative quality to decision quality, marshalling a good deal of logic and evidence supporting that claim (Estlund 2009; Landemore 2013). Thus, I draw a direct connection to decision quality from the foreshortened conception of deliberation I provided earlier—breadth/depth plus consideration/respect.

As to the first part of this hypothesis (breadth/depth), evidence from small group research suggests that more extensive and in-depth information processing yields higher-quality decisions (e.g., Peterson et al. 1998). As to the social dimension (consideration/respect), notice that consideration amounts to weighing conflicting views (i.e., substantive conflict), whereas respect is the absence of personal conflicts. Decision-making groups thrive precisely when they have the necessary substantive clash without the interpersonal drama (Gastil 1993). This is particularly important in an online context, where participants can be less likely to engage in ‘positive conflict management’ (e.g., respectful and substantive analysis of divergent views) than in face-to-face settings (Zornoza, Ripoll & Peiro 2002).

H4: Predicting government responsiveness

Dependent variables. A government agency or public official may be reluctant to solicit public input for fear that citizens will want something infeasible or undesirable. Such an eventuality puts the policymaker in the awkward position of rejecting a recommendation from constituents convened precisely to exert influence over a government decision. Government responsiveness, however, is not the same as mere acceptance. For example, a genuinely responsive public agency should be able to give a direct and thoughtful explanation for why it cannot act on a recommendation. In a sense, such a reply might count as more responsive than an agency that simply accepts a public statement, without comment, and acts on it.

Measurement of responsiveness has further complications. How does one measure the ‘response’ of a government when the public’s decisions have a legal force all their own—independent of government action? Does it matter how quickly a government responds, or does a government get credit for taking more time to give a precise reply? Do downstream policy implications matter, such as when a promise to act on advice goes unfulfilled, or when hasty action in response to a recommendation yielded a policy outcome worse than the status quo?

Conventional measures of responsiveness get around these problems by measuring whether the people get what they say they want. In one nondeliberative approach, a government scores as responsive if it produces public policies that, when coded by experts, align with survey measures of surface-level public opinion (Erikson, Wright & McIver 1993). That method also has been used to judge whether direct democracy (i.e., ballot initiatives) produces laws more responsive to the public’s values than does electoral democracy (Matsusaka 2004). A similar approach compares public preferences with a government’s policy promises and its budgetary decisions (Hobolt & Klemmensen 2008).

Translating those approaches to the present context, the public’s more deliberative judgments can stand in for any indirect measure of its preferences. That is, whatever the public decides to recommend represents its considered opinion on a matter (Fishkin 2009, 2018; Mathews 1999; Yankelovich 1991). Expert coders can then judge the alignment of the text and policy responses from a government with the public’s decision. Stopping there, however, would deem any disagreement as unresponsive, so a second coding should judge the response on two more dimensions—directness and rationale. A direct response addresses the substance of the issue in question and the public’s decision (vs. a more strategically ambiguous or oblique response). A strong rationale is one that receives a high argument score on a Discourse Quality Index (Steenbergen et al. 2003) for providing reasoning, evidence, and a clear expression of underlying values. To capture the public’s subjective sense of responsiveness, participant surveys can tap into the same two dimensions to discern whether they thought the response constituted a direct and substantive response.

Two special—but common—cases, however, require supplementary measures of responsiveness. First, when a government’s response comes later than promised, or expected, a question of timeliness arises. If citizens recommend establishing a review board for a police department, for example, how does one assess a government response if one is not forthcoming even months after the conclusion of a public consultation? In such a case, the researcher has missing data: There are no government documents to code (other than a budgetary allocation of zero), and surveys have no focal government action for participants to assess. In such a case, one may be forced to enter a score equivalent to a zero to indicate total nonresponsiveness.

The second special case is when the public consultation is empowered fully—with a final decision pulling a policy or budgetary trigger. When this is the case, expert judgments of alignment, directness, and rationale all appear unnecessary, and a survey assessment would have to carefully phrase its questions lest they instead measure broader public attitudes toward government (such as legitimacy, discussed below). One reasonable option would simply score these cases as maximally responsive.
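The coding scheme and its two special cases can be drawn together in a short Python sketch. The three expert-coded dimensions (alignment, directness, rationale) and the treatment of the special cases follow the description above. The 0 to 1 range for each dimension, the equal-weight average used to combine them, and all names are assumptions, since no combination rule is specified here.

```python
MAX_SCORE = 1.0


def responsiveness_score(alignment=None, directness=None, rationale=None, *,
                         responded=True, fully_empowered=False) -> float:
    """Combine expert codes (each assumed to range from 0 to 1) into one score."""
    if fully_empowered:
        # The public's decision takes effect on its own: score as maximal.
        return MAX_SCORE
    if not responded:
        # No government action or document to code: total nonresponsiveness.
        return 0.0
    codes = (alignment, directness, rationale)
    if any(code is None or not 0.0 <= code <= 1.0 for code in codes):
        raise ValueError("each dimension must be coded between 0 and 1")
    # Assumed rule: an equal-weight mean of the three dimensions.
    return sum(codes) / len(codes)


# A reasoned refusal versus acceptance without comment (invented codes).
reasoned_refusal = responsiveness_score(alignment=0.0, directness=1.0, rationale=1.0)
silent_acceptance = responsiveness_score(alignment=1.0, directness=0.0, rationale=0.0)
print(reasoned_refusal > silent_acceptance)  # True
```

Under this assumed rule, a direct and well-reasoned refusal outscores acceptance without comment, which matches the earlier point that responsiveness is not the same as mere acceptance.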

Predictor variables. One might take for granted that better decisions yield a favorable government response. After all, under good conditions this stands as the very purpose for consulting the public (Dzur 2008). Such a direct relationship between decision quality and responsiveness did not appear, however, in a statistical analysis of a large sample of public participation cases (Gastil et al. 2017). Even so, there exist numerous examples of favorable government responses to clear guidance (Barrett et al. 2012; Johnson & Gastil 2015). Deliberative Polling, for example, has elicited rapid and direct responses in contexts as far apart as Texas public utilities and Chinese municipal governments (Fishkin 2009, 2018; Leib & He 2006).

Moderating this relationship is a set of systemic variables that characterize a government body’s default responsiveness to democratic input. At one extreme, He and Warren (2012) discuss the prospects of deliberation in China, where an authoritarian government welcomes public input on particular issues, with strict limits on the range of alternatives considered. One could not expect a Chinese municipal government to respond favorably to any proposal that contradicted central party dictates, but this counts as an extreme example along a continuum of governments that may be more or less responsive.

In the context of a multiparty political system, a key to responsiveness may be the government’s perception that its political fortunes require such action owing to the presence of an independent media system, a vibrant civic culture, and an engaged public (Besley & Burgess 2002; Cleary 2007). Governments also vary in their capacity to respond, depending on the distribution of authority across different levels of government and the restrictions placed on government by ordinances, charters, constitutions, and other laws (Rodrik & Zeckhauser 1988). Finally, the civic attitudes and habitual practices of public officials will predict responsiveness, because only some public officials view public input as vital to their jobs (Dekker & Bekkers 2015; Dzur 2008).
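One way to make the moderation claim concrete is to write it as a regression with an interaction term. The notation is an assumption introduced for illustration: $R_i$ is the responsiveness score for consultation $i$, $Q_i$ is its decision quality, and $S_i$ is a composite of the systemic variables described above (political incentives, capacity to respond, and officials’ civic attitudes). The linear form and the single composite moderator are simplifications rather than features of the model itself.

```latex
R_i = \beta_0 + \beta_1 Q_i + \beta_2 S_i + \beta_3 (Q_i \times S_i) + \varepsilon_i
```

Under this sketch, the marginal effect of decision quality on responsiveness is $\beta_1 + \beta_3 S_i$, so a positive $\beta_3$ would indicate that better decisions earn a stronger response where systemic conditions favor responsiveness.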

H5: Predicting institutional legitimacy

Dependent variables. As much disagreement as there is about the precise meaning of deliberation, legitimacy has a longer history of conflicting conceptions and measures. One approach that fits with the conception implicit in the discussion thus far distinguishes behavioral from psychological variables as they relate to democratic political systems (Levi, Sacks & Tyler 2009). In this view, such a system has legitimacy if people comply with its laws and decisions. Underlying that willingness to comply are perceptions of procedural justice and trust in the government itself. If one’s leaders are trustworthy (and follow fair procedures), one can rest assured that the public institutions that put those leaders in place are legitimate.

Those psychological variables translate well to the context of an online deliberative consultation. Surveys could ask participants three questions about the government body that sponsored, hosted, or authorized the consultation. Is that body’s interest in public engagement genuine? Did it strive to ensure procedural fairness? Finally, does it aim to render final decisions in the public interest?

Asking those straightforward questions would constitute a departure from the more commonly used measures of legitimacy that ask about government more broadly. The most common tool is a four-item survey measure of ‘perceived system responsiveness,’ or ‘external efficacy,’ as distinguished from the internal variety (Niemi, Craig & Mattei 1991). Previous research has found that even a rewarding and empowered experience of deliberation can fail to move the needle on this abstract variable (Gastil et al. 2010; Knobloch & Gastil 2012). Part of the problem is that exceedingly high scores on this variable can reflect a kind of complacency, which clashes with the civic spark engendered by public engagement (Gastil & Xenos 2010). Although one might choose to include this variable to probe the long-term effects of ongoing consultation, I eschew this option in the name of parsimony.

In the model shown in Figure 1, I predict that this legitimacy boost could come not only to government bodies but also to the civic organizations that organize deliberative events with them. Government sometimes contracts with such civic entities, or they cocreate processes (Kelshaw & Gastil 2008; Lee 2014; Nabatchi et al. 2012). Although changes in government legitimacy remain my principal focus, it is plausible that the public perceptions of civic partners in these efforts will move in parallel.

Predictor variables. I include in the model as predictors only the subjective measures of government responsiveness. Although governments might hope to receive direct credit for their responses, as viewed by neutral third parties, the public’s disaffection stems from a felt disconnection from government (Dzur 2008). The key predictor, then, is the subjective one, whereby the sense of receiving a direct response with a strong rationale reinforces citizens’ trust in the officials, public institutions, and civic organizations that welcomed their input.

This limited hypothesis both supports and challenges assumptions implicit in deliberative democratic theory. Many theorists hold that the legitimating function of deliberation comes from the open contestation of arguments and the sense of having one’s views heard (e.g., Gutmann & Thompson 2004). In this view, it should not matter whether a government’s decision aligns with one’s own preferences or with the substance of the recommendations arising from an online deliberation. Rather, if the response is ‘genuine,’ it is legitimate. I take the same view by predicting no independent effect on legitimacy beliefs from the alignment of a government response with the public’s recommendations. At the same time, I depart from this most sanguine conception of deliberation in expecting participants’ subjective assessments of directness and rationale to be paramount. I predict no independent effect from expert codings thereof.

This discussion of legitimacy might seem incomplete without including as predictors the quality of public deliberation and the decisions/recommendations that citizens reach. The model I summarized in Figure 1 includes these variables but posits that they have only indirect effects on legitimacy perceptions. Rather, deliberative quality influences decision quality, which leads to government responsiveness and consequent changes in legitimacy perceptions. A study fortunate enough to have all those variables measured in sequence can use structural equation modeling to test whether deliberation and decision quality also have direct effects on legitimacy ratings, aside from their effects through the main paths of the model.
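The test described above can be sketched as a recursive path model. The symbols and the linear specification are assumptions introduced for illustration: $D$ denotes deliberative quality, $Q$ decision quality, $R$ perceived government responsiveness, and $L$ legitimacy perceptions.

```latex
\begin{aligned}
Q &= a_0 + a_1 D + \varepsilon_Q \\
R &= b_0 + b_1 Q + \varepsilon_R \\
L &= c_0 + c_1 R + c_2 D + c_3 Q + \varepsilon_L
\end{aligned}
```

In this notation, the effect of deliberation on legitimacy through the main path is the product $a_1 b_1 c_1$. The claim that deliberative quality and decision quality have only indirect effects corresponds to the constraint $c_2 = c_3 = 0$, which a structural equation model could assess by comparing the fit of the constrained and unconstrained versions.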

H6: Predicting the emergence of more empowered opportunities for public engagement

At this point, I have specified every variable in the model, but two causal paths require justification. The first of these closes the main loop in the model, whereby creating an opportunity for empowered deliberation leads to more such opportunities in the future. One purpose of drawing out this model was to give a more precise formulation of the notion that public deliberation is a self-reinforcing process (Burkhalter et al. 2002; Richards & Gastil 2015).

The last link in this chain holds that heightened legitimacy perceptions are the proximate cause of government institutions and civic organizations providing more deliberative opportunities in the future. This claim has face validity in that governments turn to deliberative processes partly to bolster their public credibility (Fishkin 2009, 2018). Likewise, civic organizations that partner with governments rely on public trust and can be expected to reinitiate those processes that win them more credibility. Success, in the form of rising legitimacy ratings for the consulting agency, should therefore prompt repeated iterations of this curative process.

There are at least two reasons, however, to doubt the simplicity of that formulation. First, the model I propose dismisses the possibility of other direct links. One that stands out is decision quality. In those instances where government convened the public not to score legitimacy points but to render better public policy (Dzur 2008), decision quality might prove the more powerful direct predictor of whether that same government body convenes empowered deliberative processes in the future. Second, deliberation could be its own undoing if it proves so effective at eliciting public values and policy justifications that it leaves a consulting agency with no further uncertainties about the public’s views. In such a case, if the agency sought the input for its own sake, one or two empowered deliberative processes might suffice.

Conclusion

The foregoing discussion provided conceptual and operational details for six hypotheses that, taken together, theorize a complex linkage between deliberation and the legitimacy of public institutions. In this feedback-loop model, almost all the variables shown in one row of Table 1 as a dependent variable appear as a predictor variable in another row. Figure 1 shows how those loop back through each other such that effective deliberation can boost the legitimacy of public or civic organizations sponsoring deliberation, which in turn could spur future opportunities for more deliberation.

Although this model includes a plausible chain of interconnected variables, it does not include every evaluative variable associated with public deliberation. For example, the model considers the rate of public participation but not the demographic inclusiveness of such a process (Young 2002). Likewise, the model does not account for the equality within deliberation or its procedural transparency (Karpowitz & Raphael 2014). Such variables can and should be added to this model in the future.

The prospect of testing this model effectively hinges on the existence of a sustained system for online public consultation. To make that possible, many things must happen simultaneously. Most of all, an online consultation system needs to come into being that can incorporate all of these variables. Efforts like Consul hold great promise in this regard, but their implementation remains piecemeal and elections can bring into office new administrations that choose to discontinue such projects. Thus, the ideal system has a long-term financial commitment from nongovernmental and/or governmental bodies to keep the system’s code secure (to secure privacy), stable (to maintain functionality), and dynamic (to experiment with new features). Researchers who work with public entities directly in the development of such a system and build up mutual trust will have more success securing access to the system’s data and inserting into the system the survey tools necessary for testing hypotheses such as those enumerated in this essay.

Experimentation may proceed apace in one-off laboratory experiments, which might test one or another hypothesis in this model. Such studies, however, have grave problems when it comes to ecological validity. The overriding concern in this model is testing the proposition that ongoing and empowered deliberation can elicit meaningful government responses that create mutual trust between citizens and public officials to sustain the deliberative system itself. The meaning of any of those variables depends on the context in which they are measured: An isolated test of one binary relationship outside this context may yield little information about how those variables connect in the real context of a consequential online consultation system.

Many democratic governments find themselves in a delicate political moment. Their longevity may require a concerted effort to build stronger online civic spaces that incorporate deliberative tools, support responsive policymaking, and permit direct citizen feedback. The fact that so many governments are experimenting with these processes is encouraging in that regard (Noveck 2018). Nongovernmental and academic partners could lend their expertise to that effort and leverage digital technology to secure more robust public deliberation, better government decisions, and an otherwise elusive institutional legitimacy.

Notes

- Specifications appear at the Consul site (consulproject.org/en) and in the pamphlets and other documents located there, especially its ‘dossier,’ which is a mix of mission statement, product brochure, case report, and user manual. Madrid has created an offshoot called Decide Madrid, but I refer to all uses of this toolset as simply ‘Consul’ because that platform has outlived the particular governments in Barcelona and Madrid that made its launch possible.

- This variable cannot be measured for processes relying exclusively on open-ended invitations distributed through Websites or advertisements.

Competing Interests

The author has no competing interests to declare.

Author Information

John Gastil (PhD, University of Wisconsin-Madison) is Distinguished Professor in the Department of Communication Arts and Sciences and Political Science at the Pennsylvania State University, where he is a senior scholar at the McCourtney Institute for Democracy. Gastil’s research focuses on the theory and practice of deliberative democracy, especially how small groups of people make decisions on public issues. The National Science Foundation has supported his research on the Oregon Citizens’ Initiative Review, the Australian Citizens’ Parliament, American juries, and how cultural biases shape public opinion. His most recent books are Hope for Democracy (Oxford, 2020), co-authored with Katherine R. Knobloch, and Legislature by Lot (Verso, 2019) with Erik Olin Wright.

References

1 Barber, B. R. (1984). Strong democracy: Participatory politics for a new age. Berkeley, CA: University of California Press.

2 Barrett, G., Wyman, M., & Coelho, V. S. P. (2012). Assessing the policy impacts of deliberative civic engagement: Comparing engagement in the health policy processes of Brazil and Canada. In T. Nabatchi, J. Gastil, G. M. Weiksner, & M. Leighninger (Eds.), Democracy in motion: Evaluating the practice and impact of deliberative civic engagement (pp. 181–202). New York: Oxford University Press.

3 Becker, T., & Slaton, C. D. (2000). The future of teledemocracy. New York: Praeger.

4 Besley, T., & Burgess, R. (2002). The political economy of government responsiveness: Theory and evidence from India. The Quarterly Journal of Economics, 117(4), 1415–1451. DOI: http://doi.org/10.1162/003355302320935061

5 Black, L. W., Welser, H. T., Cosley, D., & DeGroot, J. M. (2011). Self-governance through group discussion in Wikipedia: Measuring deliberation in online groups. Small Group Research, 42(5), 595–634. DOI: http://doi.org/10.1177/1046496411406137

6 Bonito, J. A., Gastil, J., Ervin, J. N., & Meyers, R. A. (2014). At the convergence of input and process models of group discussion: A comparison of participation rates across time, persons, and groups. Communication Monographs, 81(2), 179–207. DOI: http://doi.org/10.1080/03637751.2014.883081

7 Botsman, R. (2017, October 21). Big data meets Big Brother as China moves to rate its citizens. Wired. Retrieved from http://www.wired.co.uk/article/chinese-government-social-credit-score-privacy-invasion.

8 Brady, H. E., Verba, S., & Schlozman, K. L. (1995). Beyond SES: A resource model of political participation. American Political Science Review, 89(2), 271–294. DOI: http://doi.org/10.2307/2082425

9 Burkhalter, S., Gastil, J., & Kelshaw, T. (2002). A conceptual definition and theoretical model of public deliberation in small face-to-face groups. Communication Theory, 12(4), 398–422. DOI: http://doi.org/10.1111/j.1468-2885.2002.tb00276.x

10 Chang, L., Jacobson, T. L., & Zhang, W. (2013). A communicative action approach to evaluating citizen support for a government’s smoking policies. Journal of Communication, 63(6), 1153–1174. DOI: http://doi.org/10.1111/jcom.12065

11 Chou, Y. (2015). Actionable gamification: Beyond points, badges, and leaderboards. Fremont, CA: Octalysis Media.

12 Cleary, M. R. (2007). Electoral competition, participation, and government responsiveness in Mexico. American Journal of Political Science, 51(2), 283–299. DOI: http://doi.org/10.1111/j.1540-5907.2007.00251.x

13 Coelho, V. S. P., Pozzoni, B., & Montoya, M. C. (2005). Participation and public policies in Brazil. In J. Gastil & P. Levine (Eds.), The deliberative democracy handbook: Strategies for effective civic engagement in the twenty-first century (pp. 174–184). San Francisco, CA: Jossey-Bass.

14 Cohen, J. (1989). Deliberation and democratic legitimacy. In A. P. Hamlin & P. H. Pettit (Eds.), The good polity: Normative analysis of the state (pp. 17–34). New York: Basil Blackwell.

15 Coleman, S., & Moss, G. (2012). Under construction: The field of online deliberation research. Journal of Information Technology & Politics, 9(1), 1–15. DOI: http://doi.org/10.1080/19331681.2011.635957

16 Crosby, N., & Nethercutt, D. (2005). Citizens juries: Creating a trustworthy voice of the people. In J. Gastil & P. Levine (Eds.), The deliberative democracy handbook: Strategies for effective civic engagement in the twenty-first century (pp. 111–119). San Francisco, CA: Jossey-Bass.

17 Curato, N., & Böker, M. (2016). Linking mini-publics to the deliberative system: A research agenda. Policy Sciences, 49(2), 173–190. DOI: http://doi.org/10.1007/s11077-015-9238-5

18 Davies, T., & Chandler, R. (2012). Online deliberation design: Choices, criteria, and evidence. In T. Nabatchi, J. Gastil, M. Weiksner, & M. Leighninger (Eds.), Democracy in motion: Evaluating the practice and impact of deliberative civic engagement (pp. 103–133). New York: Oxford University Press.

19 Dekker, R., & Bekkers, V. (2015). The contingency of governments’ responsiveness to the virtual public sphere: A systematic literature review and meta-synthesis. Government Information Quarterly, 32(4), 496–505. DOI: http://doi.org/10.1016/j.giq.2015.09.007

20 Dillard, K. N. (2013). Envisioning the role of facilitation in public deliberation. Journal of Applied Communication Research, 41(3), 217–235. DOI: http://doi.org/10.1080/00909882.2013.826813

21 Dunne, K. (2010). Can online forums address political disengagement for local government? Journal of Information Technology & Politics, 7(4), 300–317. DOI: http://doi.org/10.1080/19331681.2010.491023

22 Dzur, A. W. (2008). Democratic professionalism: Citizen participation and the reconstruction of professional ethics, identity, and practice. University Park, PA: Pennsylvania State University Press.

23 Erikson, R. S., Wright, G. C., & McIver, J. P. (1993). Statehouse democracy: Public opinion and policy in the American states. New York: Cambridge University Press. DOI: http://doi.org/10.1017/CBO9780511752933

24 Esterling, K. M., Fung, A., & Lee, T. (2015). How much disagreement is good for democratic deliberation? Political Communication, 32(4), 529–551. DOI: http://doi.org/10.1080/10584609.2014.969466

25 Estlund, D. M. (2009). Democratic authority: A philosophical framework. Princeton, NJ: Princeton University Press. DOI: http://doi.org/10.1515/9781400831548

26 Fiorino, D. J. (1990). Citizen participation and environmental risk: A survey of institutional mechanisms. Science, Technology, & Human Values, 15(2), 226–243. DOI: http://doi.org/10.1177/016224399001500204

27 Fishkin, J. S. (2009). When the people speak: Deliberative democracy and public consultation. New York: Oxford University Press. DOI: http://doi.org/10.1093/oso/9780198820291.001.0001

28 Fishkin, J. S. (2018). Democracy when the people are thinking: Revitalizing our politics through public deliberation. New York: Oxford University Press.

29 Foa, R. S., & Mounk, Y. (2017). The signs of deconsolidation. Journal of Democracy, 28(1), 5–15. DOI: http://doi.org/10.1353/jod.2017.0000

30 Gastil, J. (1993). Identifying obstacles to small group democracy. Small Group Research, 24(1), 5–27. DOI: http://doi.org/10.1177/1046496493241002

31 Gastil, J. (2000). By popular demand: Revitalizing representative democracy through deliberative elections. Berkeley, CA: University of California Press.

32 Gastil, J. (2008). Political communication and deliberation. Thousand Oaks, CA: Sage. DOI: http://doi.org/10.4135/9781483329208

33 Gastil, J. (2014). Democracy in small groups: Participation, decision making, and communication (2nd ed.). State College, PA: Efficacy Press.

34 Gastil, J. (2016). Building a democracy machine: Toward an integrated and empowered form of civic engagement. Retrieved from Harvard Kennedy School of Government website: http://ash.harvard.edu/links/building-democracy-machine-toward-integrated-and-empowered-form-civic-engagement.

35 Gastil, J., Black, L. W., & Moscovitz, K. (2008). Ideology, attitude change, and deliberation in small face-to-face groups. Political Communication, 25(1), 23–46. DOI: http://doi.org/10.1080/10584600701807836

36 Gastil, J., Deess, E. P., Weiser, P. J., & Simmons, C. (2010). The jury and democracy: How jury deliberation promotes civic engagement and political participation. Oxford: Oxford University Press.

37 Gastil, J., Knobloch, K. R., & Richards, R. (2015). Empowering voters through better information: Analysis of the Citizens’ Initiative Review, 2010–2014: Report prepared for the Democracy Fund. Retrieved from Pennsylvania State University website: http://sites.psu.edu/citizensinitiativereview/wp-content/uploads/sites/23162/2015/05/CIR-2010-2014-Full-Report.pdf.

38 Gastil, J., & Levine, P. (Eds.). (2005). The deliberative democracy handbook: Strategies for effective civic engagement in the twenty-first century. San Francisco, CA: Jossey-Bass.

39 Gastil, J., & Richards, R. C. (2017). Embracing digital democracy: A call for building an online civic commons. PS: Political Science & Politics, 50(3), 758–763. DOI: http://doi.org/10.1017/S1049096517000555

40 Gastil, J., Richards, R., Ryan, M., & Smith, G. (2017). Testing assumptions in deliberative democratic design: A preliminary assessment of the efficacy of the Participedia data archive as an analytic tool. Journal of Public Deliberation, 13(2). DOI: http://doi.org/10.16997/jdd.277

41 Gastil, J., & Xenos, M. (2010). Of attitudes and engagement: Clarifying the reciprocal relationship between civic attitudes and political participation. Journal of Communication, 60, 218–243. DOI: http://doi.org/10.1111/j.1460-2466.2010.01484.x

42 Geenens, R. (2007). The deliberative model of democracy: Two critical remarks. Ratio Juris, 20, 355–377. DOI: http://doi.org/10.1111/j.1467-9337.2007.00365.x

43 Gilman, H. R. (2016). Democracy reinvented: Participatory budgeting and civic innovation in America. Washington, DC: Brookings Institution Press.

44 Gutmann, A., & Thompson, D. (2004). Why deliberative democracy? Princeton, NJ: Princeton University Press. DOI: http://doi.org/10.1515/9781400826339

45 Habermas, J. (1975). Legitimation crisis (T. A. McCarthy, Trans.). Boston, MA: Beacon Press.

46 He, B., & Warren, M. E. (2012). Authoritarian deliberation: The deliberative turn in Chinese political development. Perspectives on Politics, 9(2), 269–289. DOI: http://doi.org/10.1017/S1537592711000892

47 Henderson, H. (1970). Computers: Hardware of democracy. Forum, 70(2), 22–24, 46–51. DOI: http://doi.org/10.35765/forphil.1997.0201.5

48 Hendriks, C. M. (2005). Consensus conferences and planning cells: Lay citizen deliberations. In J. Gastil & P. Levine (Eds.), The deliberative democracy handbook: Strategies for effective civic engagement in the twenty-first century (pp. 80–110). San Francisco, CA: Jossey-Bass.

49 Hobolt, S. B., & Klemmensen, R. (2008). Government responsiveness and political competition in comparative perspective. Comparative Political Studies, 41(3), 309–337. DOI: http://doi.org/10.1177/0010414006297169

50 Ingham, S. (2013). Disagreement and epistemic arguments for democracy. Politics, Philosophy & Economics, 12(2), 136–155. DOI: http://doi.org/10.1177/1470594X12460642

51 Johnson, C., & Gastil, J. (2015). Variations of institutional design for empowered deliberation. Journal of Public Deliberation, 11(1). DOI: http://doi.org/10.16997/jdd.219

52 Karpowitz, C. F., & Raphael, C. (2014). Deliberation, democracy, and civic forums: Improving equality and publicity. New York: Cambridge University Press. DOI: http://doi.org/10.1017/CBO9781107110212

53 Kelshaw, T., & Gastil, J. (2008). When citizens and officeholders meet part 2: A typology of face-to-face public meetings. International Journal of Public Participation, 2(1), 33–54.

54 Knobloch, K. R., & Gastil, J. (2012, November). Civic (re)socialization: The educative effects of deliberative participation. Paper presented at the Ninety-Eighth Annual Convention of the National Communication Association, Orlando, FL.

55 Knobloch, K. R., Gastil, J., Reedy, J., & Cramer Walsh, K. (2013). Did they deliberate? Applying an evaluative model of democratic deliberation to the Oregon Citizens’ Initiative Review. Journal of Applied Communication Research, 41(2), 105–125. DOI: http://doi.org/10.1080/00909882.2012.760746

56 Landemore, H. (2013). Democratic reason: Politics, collective intelligence, and the rule of the many. Princeton, NJ: Princeton University Press. DOI: http://doi.org/10.1515/9781400845538

57 Lee, C. W. (2014). Do-it-yourself democracy: The rise of the public engagement industry. New York: Oxford University Press. DOI: http://doi.org/10.1093/acprof:oso/9780199987269.001.0001

58 Lee, J. Y. (2015). Trust and social commerce. University of Pittsburgh Law Review, 77(2), 137. DOI: http://doi.org/10.5195/LAWREVIEW.2015.395

59 Leib, E. J., & He, B. (2006). The search for deliberative democracy in China. New York: Palgrave Macmillan. DOI: http://doi.org/10.1057/9780312376154

60 Lerner, J. (2014). Making democracy fun: How game design can empower citizens and transform politics. Cambridge, MA: MIT Press. DOI: http://doi.org/10.7551/mitpress/9785.001.0001

61 Levi, M., Sacks, A., & Tyler, T. (2009). Conceptualizing legitimacy, measuring legitimating beliefs. American Behavioral Scientist, 53(3), 354–375. DOI: http://doi.org/10.1177/0002764209338797

62 Mansbridge, J. J., Hartz-Karp, J., Amengual, M., & Gastil, J. (2006). Norms of deliberation: An inductive study. Journal of Public Deliberation, 2(1). DOI: http://doi.org/10.16997/jdd.35

63 Mathews, D. (1999). Politics for people: Finding a responsible public voice (2nd ed.). Champaign, IL: University of Illinois Press.

64 Matsusaka, J. G. (2004). For the many or the few: The initiative, public policy, and American democracy. Chicago, IL: University of Chicago Press. DOI: http://doi.org/10.7208/chicago/9780226510873.001.0001

65 Muhlberger, P., & Weber, L. M. (2006). Lessons from the Virtual Agora Project: The effects of agency, identity, information, and deliberation on political knowledge. Journal of Public Deliberation, 2(1), Article 13. DOI: http://doi.org/10.16997/jdd.37

66 Nabatchi, T., Gastil, J., Weiksner, M., & Leighninger, M. (Eds.). (2012). Democracy in motion: Evaluating the practice and impact of deliberative civic engagement. New York: Oxford University Press. DOI: http://doi.org/10.1093/acprof:oso/9780199899265.001.0001

67 Neblo, M. A. (2015). Deliberative democracy between theory and practice. New York: Cambridge University Press. DOI: http://doi.org/10.1017/CBO9781139226592

68 Niemi, R. G., Craig, S. C., & Mattei, F. (1991). Measuring internal political efficacy in the 1988 National Election Study. American Political Science Review, 85, 1407–1413. DOI: http://doi.org/10.2307/1963953

69 Noveck, B. S. (2018). Crowdlaw: Collective intelligence and lawmaking. Analyse & Kritik, 40(2), 359–380. DOI: http://doi.org/10.1515/auk-2018-0020

70 Parkinson, J., & Mansbridge, J. (Eds.). (2012). Deliberative systems: Deliberative democracy at the large scale. New York: Cambridge University Press. DOI: http://doi.org/10.1017/CBO9781139178914

71 Patel, M., Sotsky, J., Gourley, S., & Houghton, D. (2013). The emergence of civic tech: Investments in a growing field. Miami, FL: The Knight Foundation.

72 Paul, S., Haseman, W. D., & Ramamurthy, K. (2004). Collective memory support and cognitive-conflict group decision-making: An experimental investigation. Decision Support Systems, 36(3), 261–281. DOI: http://doi.org/10.1016/S0167-9236(02)00144-6

73 Peña-López, I. (2017a). Citizen participation and the rise of the open source city in Spain. IT for Change. Retrieved from https://itforchange.net/mavc/wp-content/uploads/2017/06/Research-Brief-Spain.pdf.

74 Peña-López, I. (2017b). Voice or chatter? Case studies. IT for Change. Retrieved from https://itforchange.net/voice-or-chatter-making-icts-work-for-transformative-citizen-engagement.

75 Peterson, R. S., Owens, P. D., Tetlock, P. E., Fan, E. T., & Martorana, P. (1998). Group dynamics in top management teams: Groupthink, vigilance, and alternative models of organizational failure and success. Organizational Behavior and Human Decision Processes, 73(2), 272–305. DOI: http://doi.org/10.1006/obhd.1998.2763

76 Richards, R. C., & Gastil, J. (2015). Symbolic-cognitive proceduralism: A model of deliberative legitimacy. Journal of Public Deliberation, 11(2), Article 3.

77 Rodrik, D., & Zeckhauser, R. (1988). The dilemma of government responsiveness. Journal of Policy Analysis and Management, 7(4), 601–620. DOI: http://doi.org/10.2307/3323483

78 Rufín, R., Bélanger, F., Molina, C. M., Carter, L., & Figueroa, J. C. S. (2014). A cross-cultural comparison of electronic government adoption in Spain and the USA. International Journal of Electronic Government Research, 10(2), 43–59. DOI: http://doi.org/10.4018/ijegr.2014040104

79 Schwartzberg, M. (2015). Epistemic democracy and its challenges. Annual Review of Political Science, 18(1), 187–203. DOI: http://doi.org/10.1146/annurev-polisci-110113-121908

80 Showers, E., Tindall, N., & Davies, T. (2015). Equality of participation online versus face to face: An analysis of the community forum deliberative methods demonstration (SSRN Scholarly Paper No. ID 2616233). Retrieved from Social Science Research Network website: https://papers.ssrn.com/abstract=2616233. DOI: http://doi.org/10.2139/ssrn.2616233

81 Simon, J., Bass, T., Boelman, V., & Mulgan, G. (2017). Digital democracy: The tools transforming political engagement. UK, England and Wales: NESTA.

82 Smith, A. (2018, September 3). Digital platforms for urban democracy? Paper presented at the Toward a Platform Urbanism Agenda for Urban Studies, University of Sheffield, UK.

83 Smith, G., & Wales, C. (2000). Citizens’ juries and deliberative democracy. Political Studies, 48(1), 51–65. DOI: http://doi.org/10.1111/1467-9248.00250

84 Steenbergen, M. R., Bächtiger, A., Spörndli, M., & Steiner, J. (2003). Measuring political deliberation: A discourse quality index. Comparative European Politics, 1(1), 21–48. DOI: http://doi.org/10.1057/palgrave.cep.6110002

85 Sullivan, B., & Hartz-Karp, J. (2013). Grafting an online parliament onto a face-to-face process. In L. Carson, J. Gastil, J. Hartz-Karp, & R. Lubensky (Eds.), The Australian Citizens’ Parliament and the future of deliberative democracy (pp. 49–62). University Park, PA: Penn State University Press.

86 Sunstein, C. R. (2017). #Republic: Divided democracy in the age of social media. Princeton, NJ: Princeton University Press. DOI: http://doi.org/10.1515/9781400884711

87 Tetlock, P. E., Mellers, B. A., Rohrbaugh, N., & Chen, E. (2014). Forecasting tournaments: Tools for increasing transparency and improving the quality of debate. Current Directions in Psychological Science, 23(4), 290–295. DOI: http://doi.org/10.1177/0963721414534257

88 Thierer, A., Koopman, C., Hobson, A., & Kuiper, C. (2015). How the Internet, the sharing economy, and reputational feedback mechanisms solve the lemons problem. University of Miami Law Review, 70(3), 830–878. DOI: http://doi.org/10.2139/ssrn.2610255

89 Wampler, B. (2007). Participatory budgeting in Brazil: Contestation, cooperation, and accountability. University Park, PA: Pennsylvania State University Press.

90 Weinmann, C. (2018). Measuring political thinking: Development and validation of a scale for ‘deliberation within.’ Political Psychology, 39(2), 365–380. DOI: http://doi.org/10.1111/pops.12423

91 Yankelovich, D. (1991). Coming to public judgment: Making democracy work in a complex world. Syracuse, NY: Syracuse University Press.

92 Young, I. M. (2002). Inclusion and democracy. New York: Oxford University Press. DOI: http://doi.org/10.1093/0198297556.001.0001

93 Zhang, W., & Chang, L. (2014). Perceived speech conditions and disagreement of everyday talk: A proceduralist perspective of citizen deliberation. Communication Theory, 24(2), 124–145. DOI: http://doi.org/10.1111/comt.12034

94 Zornoza, A., Ripoll, P., & Peiro, J. M. (2002). Conflict management in groups that work in two different communication contexts: Face-to-face and computer-mediated communication. Small Group Research, 33(5), 481–508. DOI: http://doi.org/10.1177/104649602237167